Attention Is All You Need, the paper that introduced the Transformer, is required reading for anyone starting out in deep learning today.

Attention Is All You Need


Abstract

The dominant sequence transduction models are based on complex recurrent or convolutional neural networks that include an encoder and a decoder. The best performing models also connect the encoder and decoder through an attention mechanism. We propose a new simple network architecture, the Transformer, based solely on attention mechanisms, dispensing with recurrence and convolutions entirely. Experiments on two machine translation tasks show these models to be superior in quality while being more parallelizable and requiring significantly less time to train. Our model achieves 28.4 BLEU on the WMT 2014 English-to-German translation task, improving over the existing best results, including ensembles, by over 2 BLEU. On the WMT 2014 English-to-French translation task, our model establishes a new single-model state-of-the-art BLEU score of 41.0 after training for 3.5 days on eight GPUs, a small fraction of the training costs of the best models from the literature.


1 Introduction

Recurrent neural networks, long short-term memory [12] and gated recurrent [7] neural networks in particular, have been firmly established as state of the art approaches in sequence modeling and transduction problems such as language modeling and machine translation [29, 2, 5]. Numerous efforts have since continued to push the boundaries of recurrent language models and encoder-decoder architectures [31, 21, 13].


Recurrent models typically factor computation along the symbol positions of the input and output sequences. Aligning the positions to steps in computation time, they generate a sequence of hidden states h_t, as a function of the previous hidden state h_{t−1} and the input for position t. This inherently sequential nature precludes parallelization within training examples, which becomes critical at longer sequence lengths, as memory constraints limit batching across examples. Recent work has achieved significant improvements in computational efficiency through factorization tricks [18] and conditional computation [26], while also improving model performance in case of the latter. The fundamental constraint of sequential computation, however, remains.

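
To make the sequential constraint concrete, here is a minimal sketch of a recurrent update in plain Python. The scalar cell `h_t = tanh(w_h * h_{t-1} + w_x * x_t)` and the weight values are illustrative assumptions, not the LSTM or GRU cells cited above. The point is only that each step needs the previous hidden state, so the loop over positions cannot be run in parallel.

```python
import math


def rnn_hidden_states(inputs, w_h=0.5, w_x=1.0, h0=0.0):
    """Return [h_1, ..., h_n] for a toy scalar recurrent cell.

    The cell and its weights are hypothetical; they only illustrate
    the dependency of h_t on h_{t-1}.
    """
    states = []
    h_prev = h0
    for x_t in inputs:
        # h_t cannot be computed until h_{t-1} is known
        h_t = math.tanh(w_h * h_prev + w_x * x_t)
        states.append(h_t)
        h_prev = h_t
    return states


states = rnn_hidden_states([0.1, -0.3, 0.7])
print(states)
```

Doubling the sequence length doubles the number of dependent steps, whatever the hardware.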

Attention mechanisms have become an integral part of compelling sequence modeling and transduction models in various tasks, allowing modeling of dependencies without regard to their distance in the input or output sequences [2, 16]. In all but a few cases [22], however, such attention mechanisms are used in conjunction with a recurrent network.


In this work we propose the Transformer, a model architecture eschewing recurrence and instead relying entirely on an attention mechanism to draw global dependencies between input and output. The Transformer allows for significantly more parallelization and can reach a new state of the art in translation quality after being trained for as little as twelve hours on eight P100 GPUs.


2 Background

The goal of reducing sequential computation also forms the foundation of the Extended Neural GPU [20], ByteNet [15] and ConvS2S [8], all of which use convolutional neural networks as basic building block, computing hidden representations in parallel for all input and output positions. In these models, the number of operations required to relate signals from two arbitrary input or output positions grows in the distance between positions, linearly for ConvS2S and logarithmically for ByteNet. This makes it more difficult to learn dependencies between distant positions [11]. In the Transformer this is reduced to a constant number of operations, albeit at the cost of reduced effective resolution due to averaging attention-weighted positions, an effect we counteract with Multi-Head Attention as described in section 3.2.

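
The growth rates mentioned above can be illustrated with a simplified receptive-field count. This sketch makes several assumptions that are not in the paper: stacked convolutions with kernel width `kernel` stand in for ConvS2S, dilated convolutions whose dilation is `kernel**layer` stand in for ByteNet, and the function names are hypothetical. It counts how many layers are needed before two positions `distance` apart fall inside one receptive field.

```python
def contiguous_conv_layers(distance, kernel=3):
    """Layers of ordinary width-`kernel` convolutions needed to span `distance`."""
    if kernel < 2:
        raise ValueError("kernel must be at least 2")
    receptive, layers = 1, 0
    while receptive < distance + 1:
        receptive += kernel - 1  # each layer widens the field by kernel - 1
        layers += 1
    return layers


def dilated_conv_layers(distance, kernel=3):
    """Layers needed when layer l uses dilation kernel**l (assumed schedule)."""
    if kernel < 2:
        raise ValueError("kernel must be at least 2")
    receptive, layers = 1, 0
    while receptive < distance + 1:
        receptive *= kernel  # the field grows geometrically with depth
        layers += 1
    return layers


def self_attention_layers(distance):
    """One self-attention layer connects any two positions directly."""
    return 1


for d in (4, 16, 64):
    print(d, contiguous_conv_layers(d), dilated_conv_layers(d), self_attention_layers(d))
```

Under these assumptions the first count grows linearly with distance, the second logarithmically, and the third stays constant.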

Self-attention, sometimes called intra-attention, is an attention mechanism relating different positions of a single sequence in order to compute a representation of the sequence. Self-attention has been used successfully in a variety of tasks including reading comprehension, abstractive summarization, textual entailment and learning task-independent sentence representations [4, 22, 23, 19].

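
As a rough illustration of what "relating different positions of a single sequence" means, the sketch below computes dot-product self-attention in plain Python. It is a simplification under stated assumptions: queries, keys and values are all the raw input vectors (no learned projections, no multiple heads), and the scaling by the square root of the dimension follows the scaled dot-product form defined later in the paper. The function names are hypothetical.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def self_attention(x):
    """x is a list of n vectors of equal dimension d; returns n new vectors.

    Assumption: Q = K = V = x, i.e. no learned projection matrices.
    """
    d = len(x[0])
    outputs = []
    for query in x:
        # Compare this position with every position in the same sequence.
        scores = [
            sum(q * k for q, k in zip(query, key)) / math.sqrt(d)
            for key in x
        ]
        weights = softmax(scores)
        # The new representation is an attention-weighted average of all positions.
        outputs.append(
            [sum(w * value[j] for w, value in zip(weights, x)) for j in range(d)]
        )
    return outputs


out = self_attention([[1.0, 0.0], [0.0, 1.0]])
print(out)
```

Every output vector is computed from all input positions in a single step, and the loop over `query` has no dependency between iterations, so it could run in parallel.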

End-to-end memory networks are based on a recurrent attention mechanism instead of sequence-aligned recurrence and have been shown to perform well on simple-language question answering and language modeling tasks [28].


To the best of our knowledge, however, the Transformer is the first transduction model relying entirely on self-attention to compute representations of its input and output without using sequence-aligned RNNs or convolution. In the following sections, we will describe the Transformer, motivate self-attention and discuss its advantages over models such as [14, 15] and [8].


3 Model Architecture

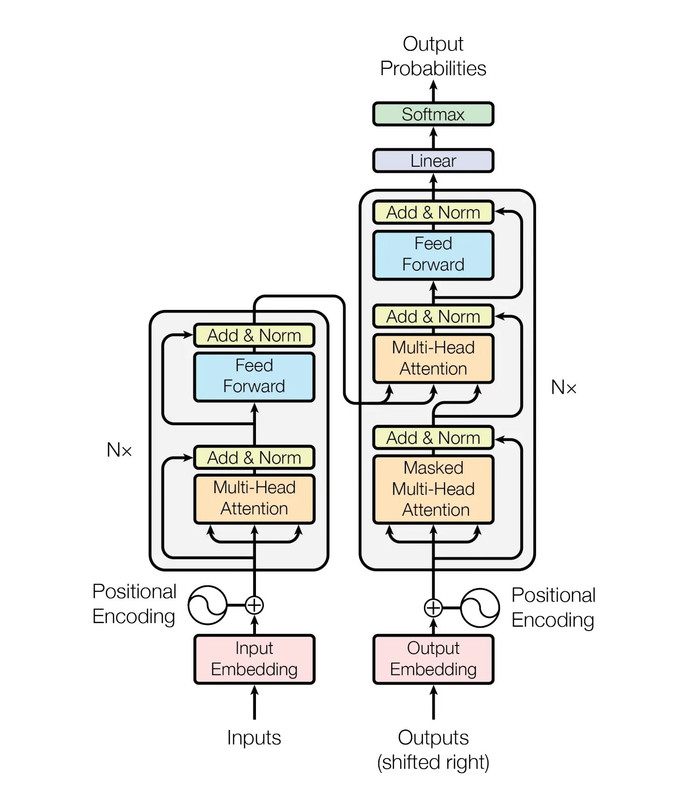

Most competitive neural sequence transduction models have an encoder-decoder structure [5, 2, 29]. Here, the encoder maps an input sequence of symbol representations (x_1, ..., x_n) to a sequence of continuous representations z = (z_1, ..., z_n). Given z, the decoder then generates an output sequence (y_1, ..., y_m) of symbols one element at a time. At each step the model is auto-regressive [9], consuming the previously generated symbols as additional input when generating the next.

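
The encoder-decoder loop described above can be sketched as follows. Everything named here is hypothetical: `greedy_decode`, the `EOS` marker, the `max_len` cap and the toy `toy_encode` / `toy_decode_step` functions (which simply emit the input in reverse) are stand-ins chosen so the example runs, not components of the Transformer.

```python
EOS = "<eos>"  # hypothetical end-of-sequence marker


def greedy_decode(x, encode, decode_step, max_len=50):
    """Encode x once, then generate output symbols one at a time."""
    z = encode(x)  # z = (z_1, ..., z_n), computed a single time
    y = []
    for _ in range(max_len):
        # Auto-regressive step: the next symbol depends on z and on
        # every symbol generated so far.
        symbol = decode_step(z, y)
        if symbol == EOS:
            break
        y.append(symbol)
    return y


def toy_encode(x):
    """Toy encoder: one float per input symbol."""
    return [float(ord(ch)) for ch in x]


def toy_decode_step(z, y):
    """Toy decoder: emits the input symbols in reverse order, then EOS."""
    index = len(z) - 1 - len(y)
    return chr(int(z[index])) if index >= 0 else EOS


result = greedy_decode("abc", toy_encode, toy_decode_step)
print(result)  # ['c', 'b', 'a']
```

In a real model `decode_step` would score a whole vocabulary and the output length m would generally differ from the input length n; the loop structure is the part that carries over.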

The Transformer follows this overall architecture using stacked self-attention and point-wise, fully connected layers for both the encoder and decoder, shown in the left and right halves of Figure 1, respectively.


Reference: blog.csdn.net/jokerwu192/article/details/125567174

Detailed walkthrough of the figure: www.jianshu.com/p/24f1624affe3